One issue I have often come across with importing large sets of data is where we have a generated primary key, usually some sort of number, and the field is not set as being an Identity field. As much as this is questionable, in itself it is not the issue here, however when importing large sets of data into these tables (where you don’t have a requirement of preserving the key), you generally have to run through the primary key generation for each row.

The most common implementation of the custom primary key generation is to have a table of the next keys where these are retrieved and incremented to perform the insertion, generally through the use of a cursor. Whilst I feel having the generated primary key set to ‘Identity’ is a better implementation, often you have to work within the boundaries of the system presented.

cursors….run away, run away

Having come across this numerous times, I thought that I am not alone in meeting such an issue and I thought I would share my solution to this problem. Firstly for demo purposes I will set up some table variables to be our source and destination tables, I will then show the cursor based implementation, followed by the Row_Number() based solution.

Demo Set Up

The following snippet sets up the demo code for later use (full code listing will appear at the end of the post). Here we set up our bulk source (#teams) and our destination (@Team), we also have the meta table (@Identifiers) which contain the nextkey for each of the tables. (table variables have been used for demo purposes only)

--define the tables for the example

declare @tblIdentity table(

tablename varchar(50),

NextIdentityValue int

)

--teamName is surely a suitable primary key,

--but for this example lets

--use the teamID

declare @tblTeam table(

teamID int not null PRIMARY KEY,

teamName varchar(50)

)

--assume we have 33 other teams in @tblTeam

insert into @tblIdentity(tablename, NextIdentityValue)

values ('tblTeams', 34)

--pre load a team into @tblTeam

insert into @tblTeam(teamID, teamName)

values(33, 'Sydney FC')

--Load up a temp table with our 'load file'

select

'Central Coast Mariners' TeamName

into #teams

insert into #teams(TeamName)

values ('Wellington Phoenix'),

('Melbourne Victory'),

('Adelaide United')

1.1 Demo setup

Cursor Implementation

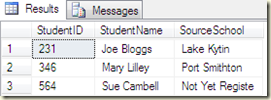

To perform the update using a cursor, we retrieve the next identifier from @tblIdentity, define the cursor and perform the inserts one at a time as we enumerate through the cursor. Finally we update the identifier table with the last value of @nextID and clean up.

declare @nextID as integer,

@nextTeam as varchar(50)

select

@nextID = NextIdentityValue

from

@tblIdentity

where

tablename = 'tblTeams'

declare bulkCrs Cursor for select TeamName from #teams

open bulkCrs

fetch next from bulkCrs into @nextTeam

while @@FETCH_STATUS = 0

begin

insert into @tblTeam(teamID, teamName)

values (@nextID, @nextTeam)

--update the next identifier varaible

set @nextID = @nextID + 1

fetch next from bulkCrs into @nextTeam

end

close bulkcrs

deallocate bulkcrs

drop table #teams

--now update the table of identifiers to reflect the change we have made

update

@tblIdentity

set

NextIdentityValue = @nextID

where

tablename = 'tblTeams'

1.2 Cursor example

Whilst the use of cursors might be acceptable for 4 rows, if this was a truly bulk insert of millions you can see how the cursor solution would not scale very well. What we need is the use of a single, set based operation to replace the cursor and this is where the usage of row_number comes into play.

Row_Number() Solution

Row_Number() is a tsql built in ranking function which, surprisingly, returns the sequential row number that a row, within a set, sits at, based upon the partitioning and ordering clauses that we set. Essentially it gives our set order and labels that order for our own means. In this case, we want the row numbering to be unique, as it will be added to the next id, so we will ignore the ‘partition by’ clause and just utilise the ‘order by’ clause.

This demo uses the same set up as in listing 1.1 above but differs where it would have hit the cursor in listing 1.2.

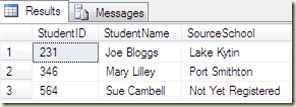

Listing 1.3, below, is my preferred method around this issue as it is able to perform the insert in a single, set based method without the need to resort to cursors. The initial set up and retrieval of the next ID are the same as the cursor based operation but the insert into @tblTeam is a lot cleaner and is performed in one step.

Row_Number() , provides us with a means for determining a key for the insert and when added to @nextID it becomes the sequential set matching the tail of our target table. In this case, the Row_Number() ordering is determined by the ‘Order By’ clause on TeamName, as the row number returned is sequential and unique even when duplicate values in the ‘Order By’ clause exist. Although it does not matter which column the order by is applied to, for consistency it is best to apply this clause to the same order that the data would have been inserted through your application if applicable.

Following the insert, we must update the identifier table to reflect the new entries in the Team table. The use of @@RowCount provides this mechanism with which we determine how many rows where inserted into @TblTeam and hence, the method for updating @tblIdentity. One catch with this, is that the use of @@RowCount must be the very next step in the process as this variable will reflect only the previously executed statement.

declare @nextID as integer,

@nextTeam as varchar(50)

--we still need to retrieve the next identityID from our identifier table

select

@nextID = NextIdentityValue

from

@tblIdentity

where

tablename = 'tblTeams'

--now do the insert in one, set based, operation through

--the help of row_number()

insert into

@tblTeam(teamID, teamName)

select

ROW_NUMBER() over (order by teamName) + @nextID - 1,

TeamName

from

#Teams

--similarly we must also update the identifier table

--at the end of the process as the underlying business

--logic expects it to be in sync

update

@tblIdentity

set

NextIdentityValue = @nextID + @@ROWCOUNT

where

tablename = 'tblTeams'

1.3 Row_Number() example

…and there we have it, the need for a cursor has been removed and we can be comfortable that the single, set based, insert will scale to meet much larger datasets.

Full code listings:

Cursor based bulk insert

--define the tables for the example

declare @tblIdentity table(

tablename varchar(50),

NextIdentityValue int

)

--teamName is surely a suitable primary key,

--but for this example lets

--use the teamID

declare @tblTeam table(

teamID int not null PRIMARY KEY,

teamName varchar(50)

)

--assume we have 33 other teams in @tblTeam

insert into @tblIdentity(tablename, NextIdentityValue)

values ('tblTeams', 34)

--pre load a team into @tblTeam

insert into @tblTeam(teamID, teamName)

values(33, 'Sydney FC')

--Load up a temp table with our 'load file'

select

'Central Coast Mariners' TeamName

into #teams

insert into #teams(TeamName)

values ('Wellington Phoenix'),

('Melbourne Victory'),

('Adelaide United')

declare @nextID as integer,

@nextTeam as varchar(50)

select

@nextID = NextIdentityValue

from

@tblIdentity

where

tablename = 'tblTeams'

declare bulkCrs Cursor for select TeamName from #teams

open bulkCrs

fetch next from bulkCrs into @nextTeam

while @@FETCH_STATUS = 0

begin

insert into @tblTeam(teamID, teamName)

values (@nextID, @nextTeam)

--update the next identifier varaible

set @nextID = @nextID + 1

fetch next from bulkCrs into @nextTeam

end

close bulkcrs

deallocate bulkcrs

drop table #teams

--now update the table of identifiers to reflect the change we have made

update

@tblIdentity

set

NextIdentityValue = @nextID

where

tablename = 'tblTeams'

select

teamID, teamName

from

@tblTeam

Row_Number based bulk insert

--define the tables for the example

declare @tblIdentity table(

tablename varchar(50),

NextIdentityValue int

)

--teamName is surely a suitable primary key, but for this example lets

--use the teamID

declare @tblTeam table(

teamID int not null PRIMARY KEY,

teamName varchar(50)

)

--assume we have 33 other teams in @tblTeam

insert into @tblIdentity(tablename, NextIdentityValue)

values ('tblTeams', 34)

--pre load a team into @tblTeam

insert into @tblTeam(teamID, teamName)

values(33, 'Sydney FC')

--Load up a temp table with our 'load file'

select

'Central Coast Mariners' TeamName

into #teams

insert into #teams(TeamName)

values ('Wellington Phoenix'),

('Melbourne Victory'),

('Adelaide United')

declare @nextID as integer,

@nextTeam as varchar(50)

--we still need to retrieve the next identityID from our identifier table

select

@nextID = NextIdentityValue

from

@tblIdentity

where

tablename = 'tblTeams'

--now do the insert in one, set based, operation through

--the help of row_number()

insert into

@tblTeam(teamID, teamName)

select

ROW_NUMBER() over (order by teamName) + @nextID - 1,

TeamName

from

#Teams

--similarly we must also update the identier table

--at the end of the process as the underlying business

--logic expects it to be in sync

update

@tblIdentity

set

NextIdentityValue = @nextID + @@ROWCOUNT

where

tablename = 'tblTeams'

drop table #teams

select

teamID, teamName

from

@tblTeam

select * from @tblIdentity

Links

Possible Enhancements

- Join with the identity table in the Row_Number based insert to remove the extra step of retrieving the key

- Utilise try…catch and transactions in order to make the bulk insert and the subsequent update of the identity table an atomic operation